3 Ways the JVM Makes Your Life Easier

The Java programming language has been around for over 25 years now, which is quite a long time given how young the information age still is. During this time, it has been used by millions of developers and by many of the largest companies in the software industry. Even today it consistently ranks in the top three most popular programming languages. So why is it so popular, and why has it stood the test of time while other languages have come and gone? There are likely many reasons for Java’s success, but one major contributor is certainly the Java Virtual Machine (JVM).

The JVM is a remarkable piece of software, responsible for a whole range of things that make a developer’s life easier. The creator of Java, James Gosling, said “Java is a blue collar language. It’s not PhD thesis material, but a language for a job”. The JVM is designed with exactly this vision in mind: it lets you focus on the genuinely hard parts of designing software, such as understanding new domains and writing maintainable, human-readable code, while it handles some of the most time-consuming aspects of programming, like allocating and deallocating memory. So, without further ado, read on for the 3 main ways the JVM makes your life easier and lets you spend more time making excellent software.

1. Write once, run anywhere

Write once, run anywhere was a phrase coined by Sun Microsystems to promote the fact that Java code could be compiled once and then run on different platforms with no changes to the code itself. This is less of a selling point today than it once was: many applications now run in containers that bundle their own operating system environment, so they don’t need to worry as much about the intricacies of shipping to various platforms. Regardless, the phrase is a good way to illustrate one of Java’s most useful design features: the decoupling of the code from the runtime.

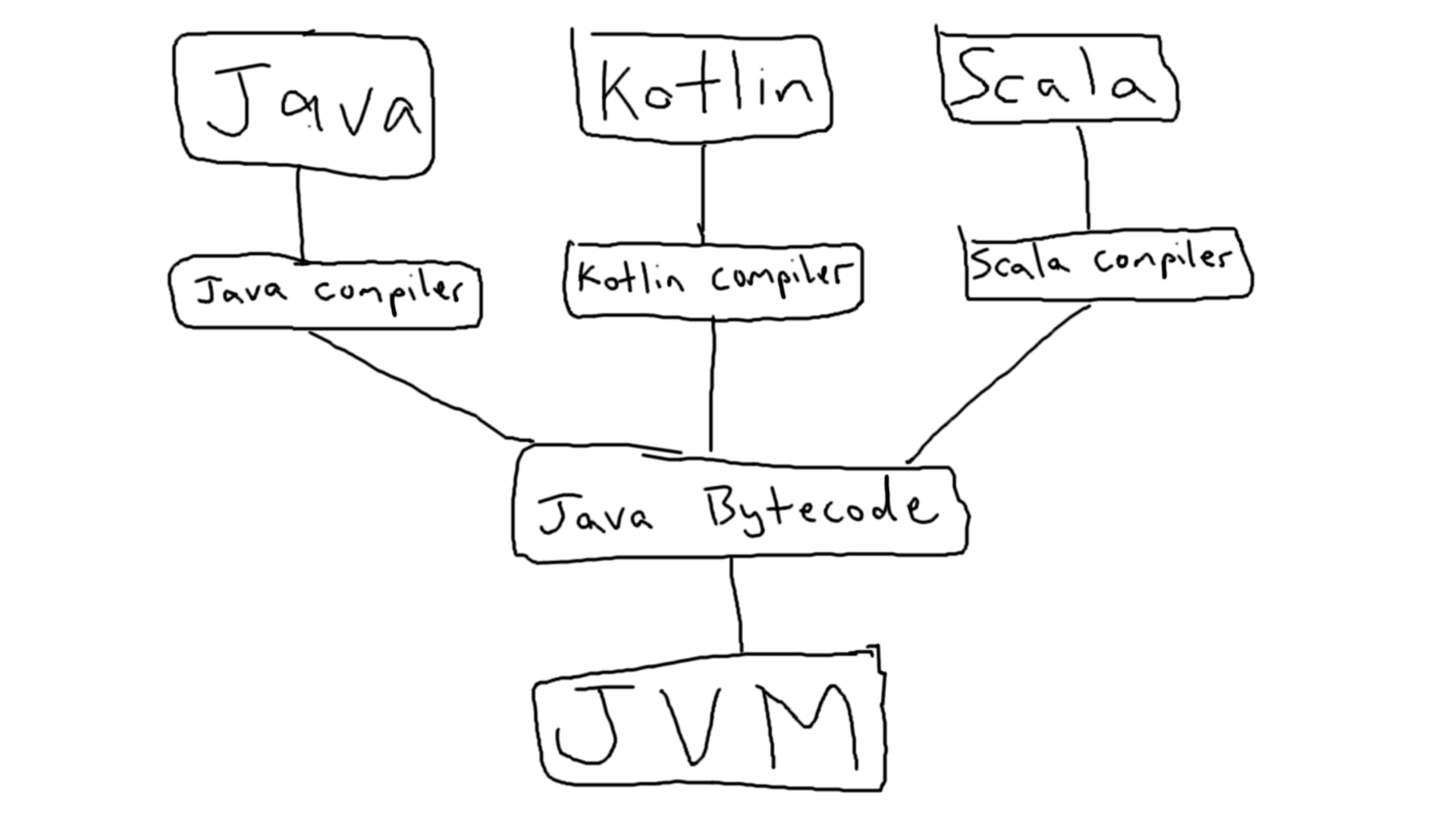

To explain this, consider a compiled language like C++, where the compiler turns the code you write into machine code that the processor executes directly, or an interpreted language like Python, where your code is executed line by line by the interpreter. Java, on the other hand, is first compiled into an intermediate language, Java bytecode, which the JVM then executes instruction by instruction. This intermediate step means the source language can be swapped out for any other language that compiles to Java bytecode (e.g. Kotlin or Scala), and the JVM will happily run it.

JVM languages compile to Java bytecode which is then run by the JVM.
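To see that `javac` really produces bytecode rather than machine code, here is a minimal sketch: a hypothetical `BytecodeHeader` class that reads its own compiled `.class` file and prints the header defined by the JVM specification. Every class file starts with the magic number `0xCAFEBABE`, followed by a minor and a major version number (for example, 52 for Java 8 and 61 for Java 17). It assumes a top-level class loaded from a plain `.class` file or JAR, so that `getResourceAsStream` can find it.

```java
import java.io.DataInputStream;
import java.io.IOException;
import java.io.InputStream;

public class BytecodeHeader {
    // Reads the first eight bytes of a class file: magic, minor version, major version.
    static int[] readHeader(Class<?> clazz) throws IOException {
        // Resolved relative to the class's own package; assumes a top-level class.
        String resource = clazz.getSimpleName() + ".class";
        InputStream raw = clazz.getResourceAsStream(resource);
        if (raw == null) {
            throw new IOException("class file not found: " + resource);
        }
        try (DataInputStream in = new DataInputStream(raw)) {
            int magic = in.readInt();            // u4, always 0xCAFEBABE
            int minor = in.readUnsignedShort();  // u2
            int major = in.readUnsignedShort();  // u2, identifies the class file version
            return new int[] {magic, minor, major};
        }
    }

    public static void main(String[] args) throws IOException {
        int[] header = readHeader(BytecodeHeader.class);
        System.out.printf("magic=0x%X minor=%d major=%d%n", header[0], header[1], header[2]);
    }
}
```

Running `javap -c BytecodeHeader` on the same class file disassembles the bytecode instructions the JVM will execute, and the same tool works on class files produced by the Kotlin or Scala compilers.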

Being both compiled and interpreted, Java (from here on we’ll talk about Java, but as we’ve discussed, any JVM language can be substituted) benefits from a compiler that does useful things like catching bugs and enforcing type correctness at compile time, as well as an interpreter (the JVM) that makes it platform independent and enables other powerful features such as reflection.
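Reflection is a good example of what the runtime makes possible: because the JVM keeps metadata about every loaded class, code can look up and call a method by name while the program is running. The sketch below uses only `java.lang.reflect.Method`; the `Greeter` class and the `callByName` helper are hypothetical, made up for illustration.

```java
import java.lang.reflect.Method;

public class ReflectionDemo {
    // Hypothetical class used only for this demonstration.
    public static class Greeter {
        public String greet(String name) {
            return "Hello, " + name;
        }
    }

    // Finds a public one-argument method by name at runtime and invokes it.
    static Object callByName(Object target, String methodName, String arg)
            throws ReflectiveOperationException {
        Method m = target.getClass().getMethod(methodName, String.class);
        return m.invoke(target, arg);
    }

    public static void main(String[] args) throws ReflectiveOperationException {
        Object result = callByName(new Greeter(), "greet", "JVM");
        System.out.println(result); // prints "Hello, JVM"
    }
}
```

Nothing in `callByName` mentions `Greeter` at compile time; the method is resolved purely from the metadata the JVM holds at runtime.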

2. Automatic Memory Management

The allocation and deallocation of memory is a fundamental part of software as all programs must store values, however small or short-lived they might be. In languages like C++ this is controlled manually by the developer, whereas in Java this is handled by the JVM. Thanks to the JVM, the Java developer does not need to worry about forgetting to deallocate and causing a memory leak or other such manual memory management woes.

This memory management system works by requesting a portion of the system’s memory upfront, which the JVM then has free rein over for the lifetime of the program. This part of memory is called the heap. As well as allocating new objects on the heap, the JVM is responsible for clearing out old objects to make room for new ones. This clearing process, called garbage collection, is a very complex area of computer science, but at its core it aims to identify dead objects (objects the program can no longer reach through any reference) and remove them with minimal disturbance to the running program. That disturbance can take the form of long pauses, high CPU usage or reduced throughput while garbage collection is running.
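The heap figures are visible from inside a Java program through `java.lang.Runtime`. The sketch below (the `HeapStats` class is made up for illustration) prints them, allocates an array with `new` and then drops the only reference to it, without ever freeing anything explicitly. The exact numbers depend on the machine and on flags such as `-Xmx`.

```java
public class HeapStats {
    private static final long MB = 1024 * 1024;

    // Heap currently in use: the total heap minus its unused part.
    static long usedBytes() {
        Runtime rt = Runtime.getRuntime();
        return rt.totalMemory() - rt.freeMemory();
    }

    public static void main(String[] args) {
        Runtime rt = Runtime.getRuntime();
        // maxMemory():   the most the JVM will attempt to use (typically governed by -Xmx)
        // totalMemory(): the heap the JVM currently holds, which can grow towards maxMemory()
        // freeMemory():  the unused portion of totalMemory()
        System.out.printf("max=%d MB, total=%d MB, free=%d MB%n",
                rt.maxMemory() / MB, rt.totalMemory() / MB, rt.freeMemory() / MB);

        // Allocate with `new`; Java has no matching free/delete call.
        byte[] buffer = new byte[8 * (int) MB];
        System.out.println("used after allocation: " + usedBytes() / MB + " MB");

        // Dropping the only reference makes the array unreachable ("dead"),
        // so a future garbage collection is free to reclaim it.
        buffer = null;
    }
}
```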

The great difficulty with garbage collection, and the reason disturbances still occur despite every attempt to minimise them, is that the collector must never remove a live object, as this would have a very bad effect on the running software. To guarantee this, drastic measures must be taken, such as momentarily pausing the entire application (referred to as “Stop the World”) at certain stages of collection. Even then, this is not as simple as freezing the program instantly; the JVM signals to all application threads that they need to stop. For a thread running in interpreted mode this is simple, but a thread running machine code produced by the JIT compiler (more on this later!) must first reach its next safepoint before it can be stopped and garbage collection can continue. So garbage collection is clearly far from trivial, but the JVM draws on several decades of research and development so that you don’t need to worry about it.

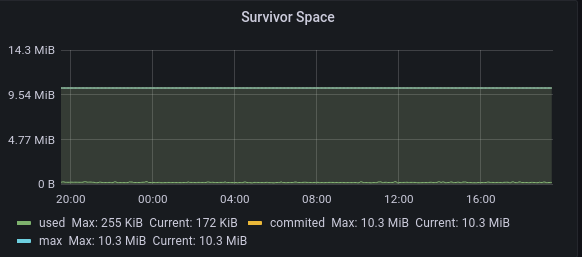

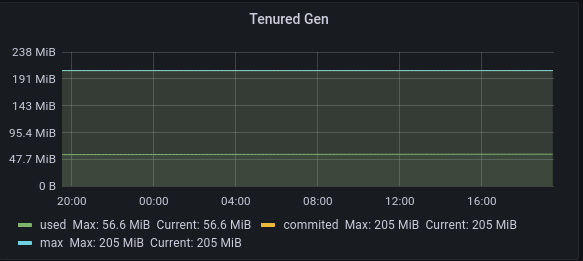

The JVM (JVMs and garbage collection subsystems vary, but for the purposes of this post we’ll be referring to the HotSpot JVM) approaches memory management following the Weak Generational Hypothesis. This states that the vast majority of objects are short-lived, and most of those that aren’t will live for a very long time. To exploit this, the JVM splits the heap into different spaces: Eden, Survivor and Tenured. Eden is where new objects are allocated and is the largest part of the young generation (Eden plus Survivor). The Survivor spaces are the smallest and hold objects that have survived garbage collection in Eden. Tenured space holds objects that have survived several garbage collections and is ideally full of long-lived objects that don’t need removing any time soon. The idea is that garbage collection should happen frequently in Eden, and only rarely should a full garbage collection of the entire heap (Eden + Survivor + Tenured) be necessary. The benefit is that the frequent, small collections in Eden cause minimal disturbance to the application, while full garbage collections (which is where the long pauses occur) are avoided where possible. This is of course the ideal and is not always achievable, but for the majority of use cases the JVM intelligently configures the garbage collector to work well in this manner.

Garbage collection ideally happens most frequently in Eden space…

…less frequently in Survivor space…

…and least frequently in Tenured space (full garbage collection).
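One way to watch the generational design at work is to allocate lots of short-lived objects and then ask the JVM how many collections each of its collectors has performed, using `java.lang.management.GarbageCollectorMXBean`. This is a sketch: the `GcCounts` class and its 16-slot buffer (which keeps a few recent arrays reachable so the allocations are not optimised away entirely) are assumptions for illustration, and the collector names you see depend on which garbage collector is in use.

```java
import java.lang.management.GarbageCollectorMXBean;
import java.lang.management.ManagementFactory;
import java.util.LinkedHashMap;
import java.util.Map;

public class GcCounts {
    // Keeps only the 16 most recent arrays reachable; everything older dies young.
    private static final int[][] RECENT = new int[16][];

    static long churn(int iterations) {
        long checksum = 0;
        for (int i = 0; i < iterations; i++) {
            int[] temp = new int[64];
            temp[0] = i;
            RECENT[i & 15] = temp; // overwriting a slot turns the previous array into garbage
            checksum += temp[0];
        }
        return checksum;
    }

    // Collection count per collector, e.g. one young-generation and one old-generation collector.
    static Map<String, Long> collectionCounts() {
        Map<String, Long> counts = new LinkedHashMap<>();
        for (GarbageCollectorMXBean gc : ManagementFactory.getGarbageCollectorMXBeans()) {
            // getCollectionCount() returns -1 if the collector does not define a count
            counts.put(gc.getName(), gc.getCollectionCount());
        }
        return counts;
    }

    public static void main(String[] args) {
        long checksum = churn(5_000_000);
        System.out.println("checksum=" + checksum);
        collectionCounts().forEach((name, count) ->
                System.out.println(name + ": " + count + " collections"));
    }
}
```

With G1, the default collector in recent HotSpot releases, you would typically see the young-generation collector’s count far exceed the old-generation one. Running with `java -Xlog:gc GcCounts` (JDK 9 and later) also logs each pause as it happens.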

Although automatic memory management is a complex and difficult problem, the JVM does an excellent job of it and frees you up to focus on other parts of your software.

3. Profile Guided Optimisation

One of the drawbacks of interpreted code is that it performs worse than code compiled to machine code, simply because of the overhead of the interpreter executing each instruction rather than the processor executing the code directly. The JVM remedies this by using profile guided optimisation to compile the “hot” parts of the code into highly optimised machine code, which can then be executed directly rather than interpreted. This process of swapping out interpreted code for machine code at runtime is called Just-in-Time (JIT) compilation.

Because compiling code while the program is running is expensive, the JVM records information about the application, in particular which paths are used most frequently, and then prioritises which of them to compile. Identifying these “hot spots” is where the HotSpot JVM gets its name. JIT compilation of hot paths can give a massive performance boost and produce code that executes as fast as (or in some cases even faster than) code written in compiled languages like C++. I can almost hear the anguish of C++ developers already, so to justify this: the JIT compiler can sometimes beat the C++ compiler because of the dynamic nature of the JVM. The JVM can identify exactly which CPU it is running on and potentially optimise using processor features specific to that CPU, whereas the C++ compiler, not knowing the target system in this much detail, needs to be more conservative. It can also use the profile it has gathered, for example inlining a method call that has only ever been seen to go to one implementation. The consequence of optimising for a processor feature that doesn’t exist is an application that simply doesn’t run at all, and it certainly won’t be winning any benchmarks like that.
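A rough way to watch JIT compilation happen is HotSpot’s `-XX:+PrintCompilation` flag, which logs methods as they are compiled. In the sketch below (all names are hypothetical), `totalArea` is called thousands of times, so it quickly becomes hot, and the `shape.area()` call inside it has only ever seen one implementation, `Square`: the kind of profile information HotSpot can use to inline that call.

```java
public class HotCallSite {
    // Hypothetical types used only for illustration.
    interface Shape {
        double area();
    }

    static final class Square implements Shape {
        private final double side;

        Square(double side) {
            this.side = side;
        }

        public double area() {
            return side * side;
        }
    }

    // Called in a tight loop, this method becomes "hot"; its shape.area()
    // call site only ever sees Square, which the JVM's profile records.
    static double totalArea(Shape[] shapes) {
        double total = 0;
        for (Shape shape : shapes) {
            total += shape.area();
        }
        return total;
    }

    public static void main(String[] args) {
        Shape[] shapes = new Shape[1_000];
        for (int i = 0; i < shapes.length; i++) {
            shapes[i] = new Square(i % 10);
        }
        double sink = 0; // keep the results so the work is not discarded
        for (int iteration = 0; iteration < 20_000; iteration++) {
            sink += totalArea(shapes);
        }
        System.out.println("sink=" + sink);
    }
}
```

Run it with `java -XX:+PrintCompilation HotCallSite` and look for lines mentioning `totalArea` and `area`; the output format is HotSpot-specific and differs between JDK versions.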

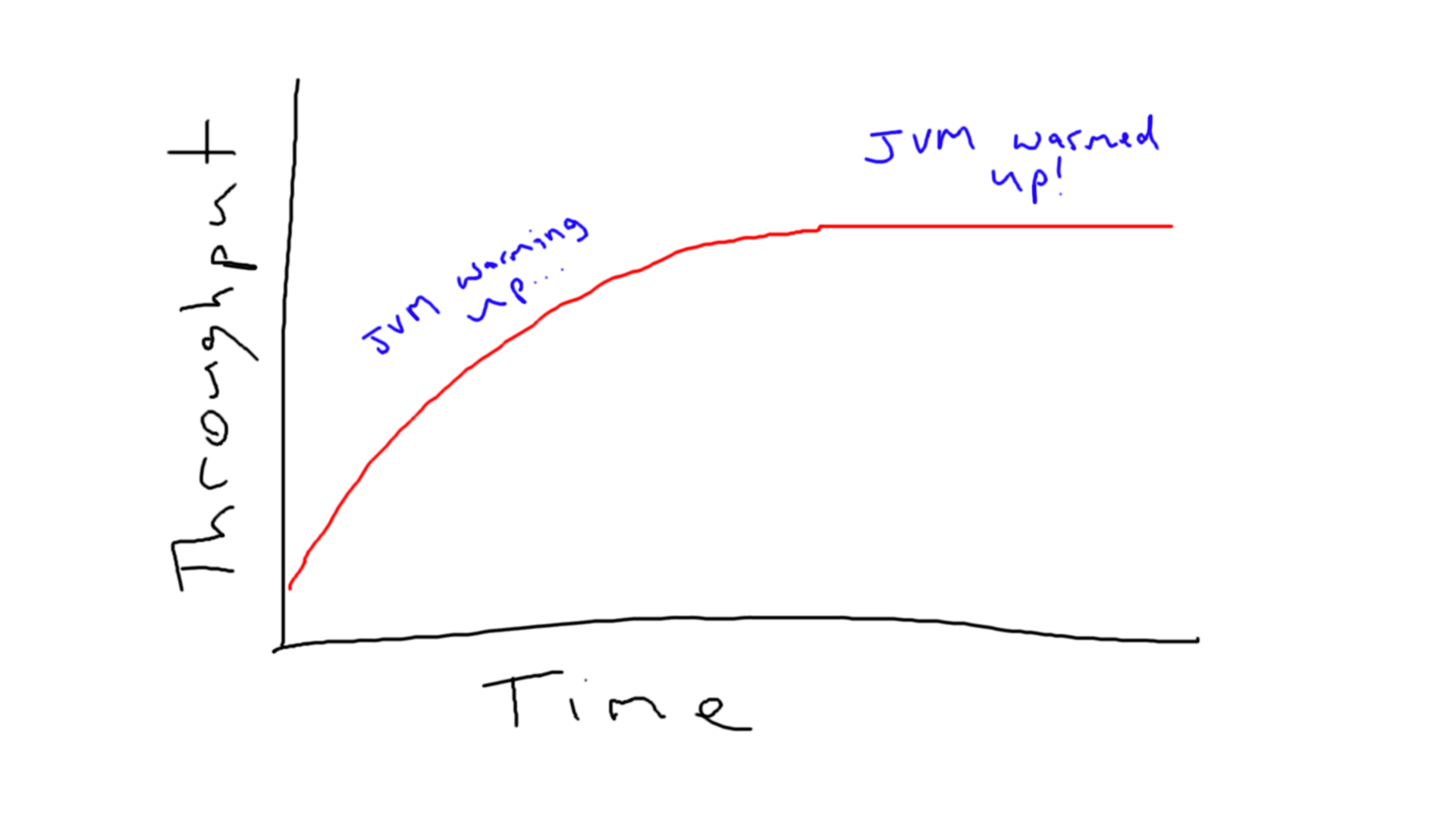

A drawback of profile guided optimisation and JIT compilation is the initial period of the application’s life, when the JVM is still collecting information on hot spots and has not yet compiled any bytecode into optimised machine code. This is referred to as JVM warmup, and it results in poor performance until the JIT compiler kicks in. Warmup can be a problem for services that are short-lived, scale up and down often, or need consistently low response times. Some JVM implementations, like OpenJ9, offer Ahead-of-Time (AOT) compilation (compiling up front, as C++ does). Offloading JIT compilation to a separate JIT server is also being explored as a way to improve performance during the warmup period.

Until the JVM has completed its JIT compilation, it is said to be warming up.
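Warmup is easy to observe, if not to measure precisely. The sketch below (the `WarmupDemo` class and `sumOfSquares` method are hypothetical) times ten identical rounds of work with `System.nanoTime()`; on HotSpot the first round or two will often be noticeably slower than the rest, though the exact numbers depend on the machine, JVM version and flags.

```java
public class WarmupDemo {
    // A small CPU-bound method that the JIT compiler is likely to treat as hot.
    static long sumOfSquares(int n) {
        long total = 0;
        for (int i = 0; i < n; i++) {
            total += (long) i * i;
        }
        return total;
    }

    public static void main(String[] args) {
        long sink = 0; // consume results so the work cannot simply be skipped
        for (int round = 1; round <= 10; round++) {
            long start = System.nanoTime();
            for (int call = 0; call < 1_000; call++) {
                sink += sumOfSquares(10_000);
            }
            long micros = (System.nanoTime() - start) / 1_000;
            System.out.println("round " + round + ": " + micros + " us");
        }
        System.out.println("sink=" + sink);
    }
}
```

Hand-rolled timing like this is only good for seeing the trend; for serious measurements, a harness such as JMH, which runs explicit warmup iterations before measuring, is the usual tool.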

In the majority of cases, though, JIT compilation works perfectly well and lets the JVM optimise your code where it matters, again leaving you free to spend more time elsewhere.

So, to wrap up: if you want to write clean, maintainable, yet still performant code, the JVM will help you do it. With awesome features like write once, run anywhere, automatic memory management and profile guided optimisation, you’ve got no excuse for writing ugly code!

Further Reading

I highly recommend Optimizing Java by Benjamin J. Evans, James Gough and Chris Newland (O’Reilly) for an excellent overview of the JVM and its many features. It’s written in a way that is easy to follow, yet doesn’t skimp on the details. Definitely one of my favourite programming-related books!